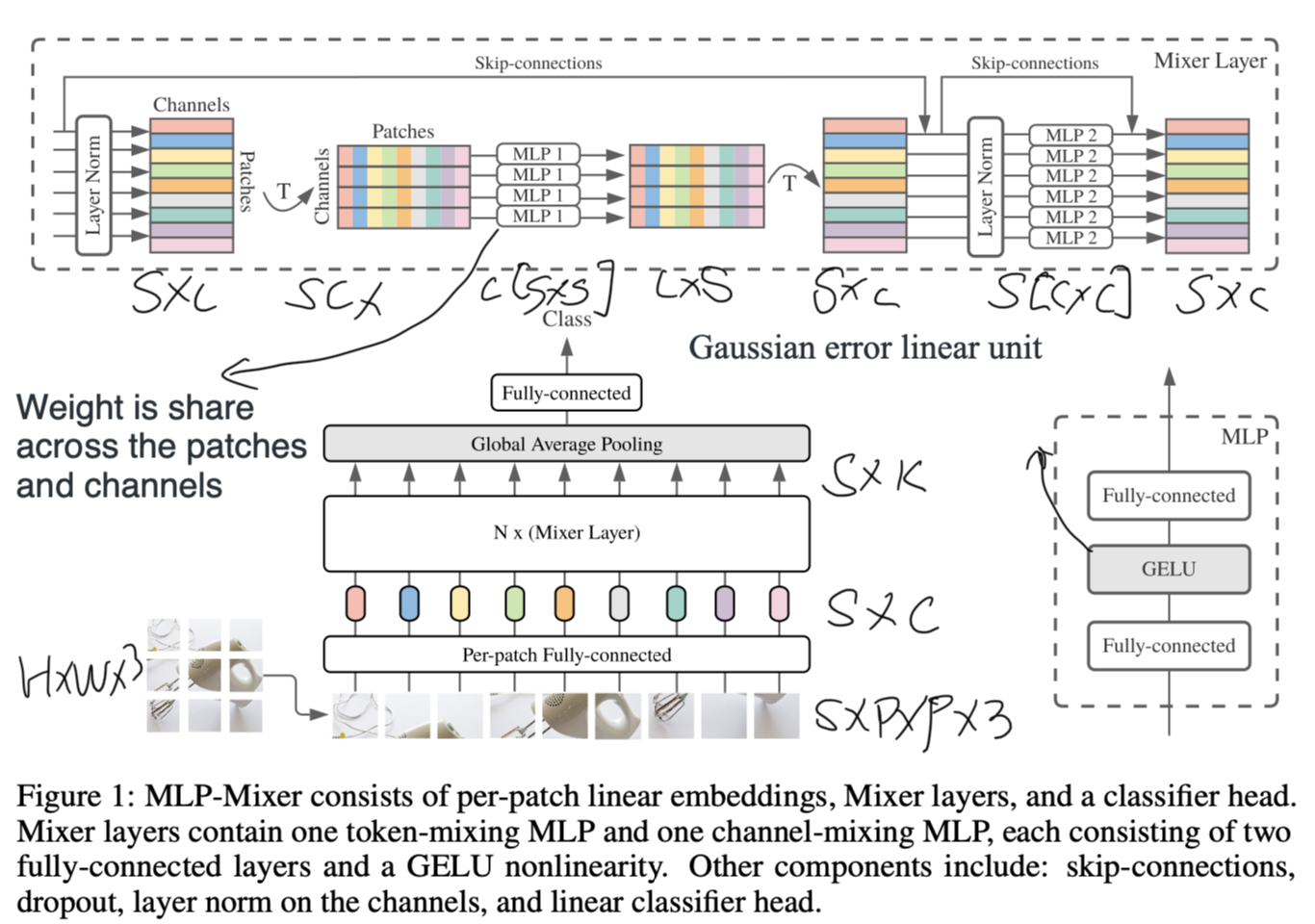

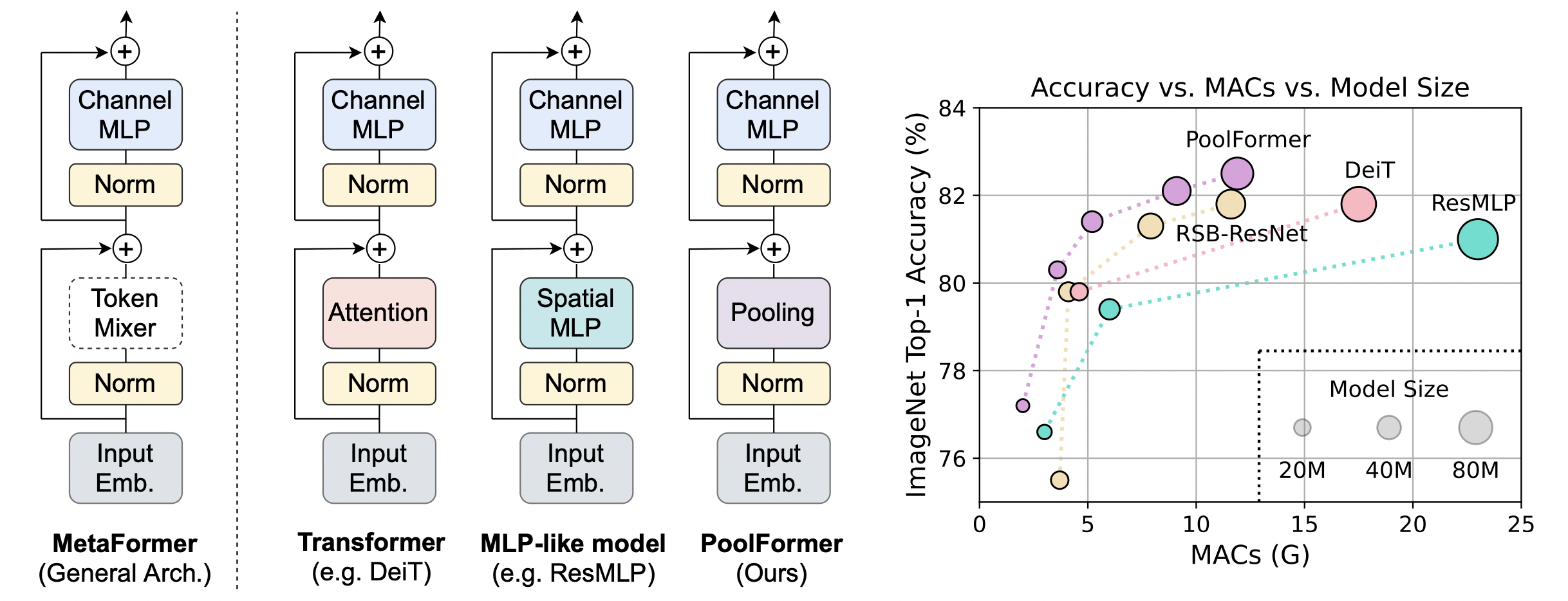

akira on X: "https://t.co/Ee3uoMJeQQ They have shown that even if we separate the token mixing part of the Transformer into the token mixing part and the MLP part and replace the token

![PDF] Exploring Corruption Robustness: Inductive Biases in Vision Transformers and MLP-Mixers | Semantic Scholar PDF] Exploring Corruption Robustness: Inductive Biases in Vision Transformers and MLP-Mixers | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/b145a25df2457bedfddeecb4be37828e43f6cc80/7-Figure1-1.png)

PDF] Exploring Corruption Robustness: Inductive Biases in Vision Transformers and MLP-Mixers | Semantic Scholar

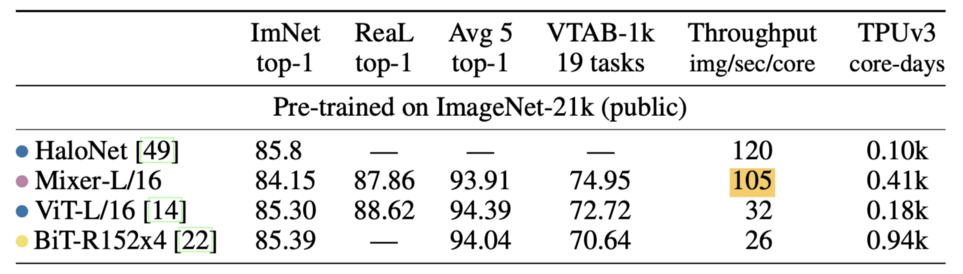

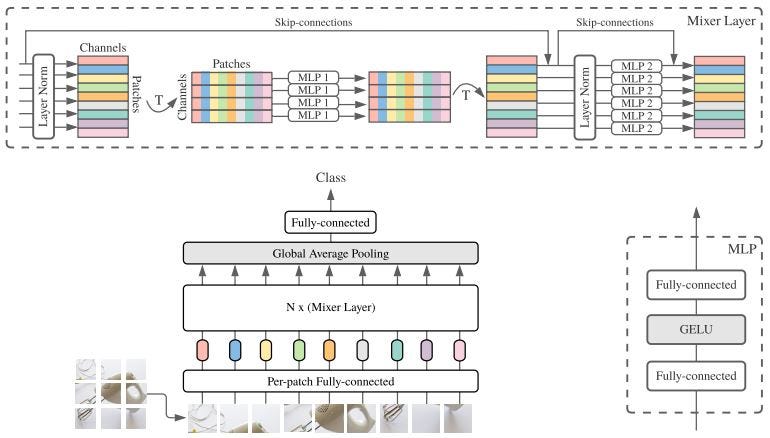

![PDF] MLP-Mixer: An all-MLP Architecture for Vision | Semantic Scholar PDF] MLP-Mixer: An all-MLP Architecture for Vision | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/3d4a76a06e771ae450494b60522eab9105709024/6-Figure3-1.png)

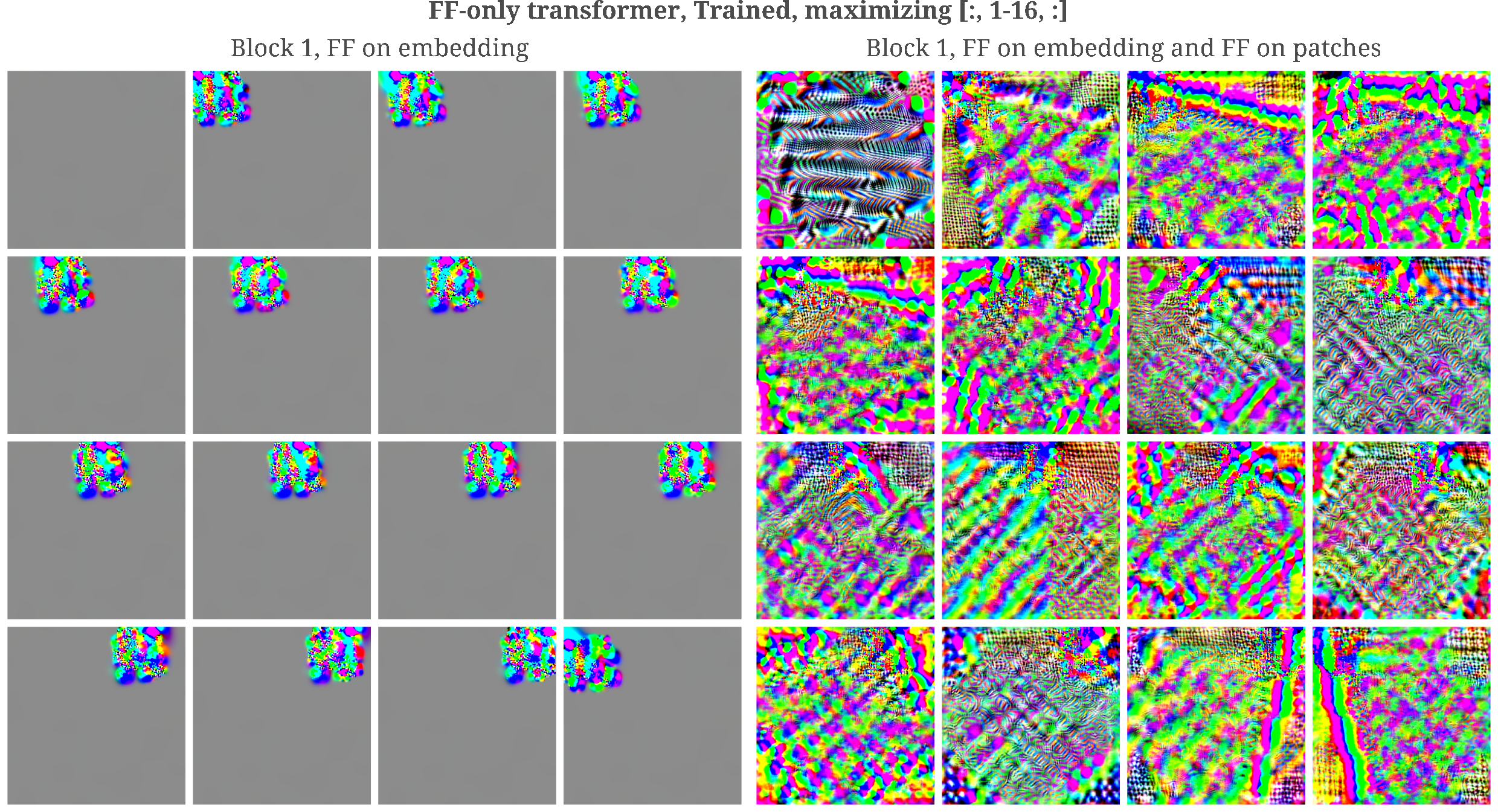

![PDF] AS-MLP: An Axial Shifted MLP Architecture for Vision | Semantic Scholar PDF] AS-MLP: An Axial Shifted MLP Architecture for Vision | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/71363797140647ebb3f540584de0a8758d2f7aa2/5-Figure3-1.png)

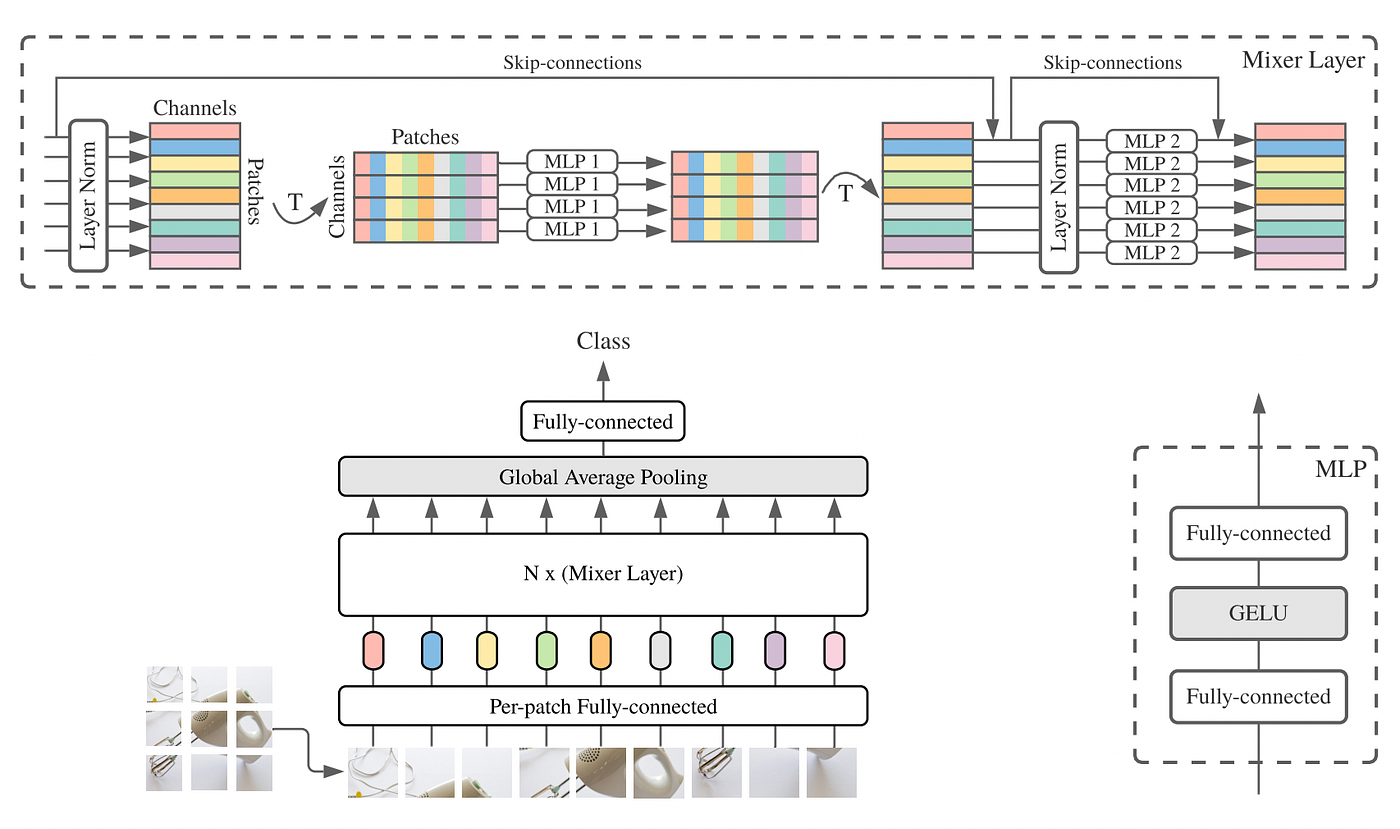

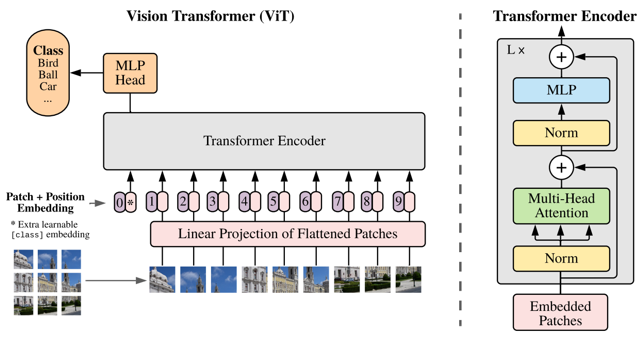

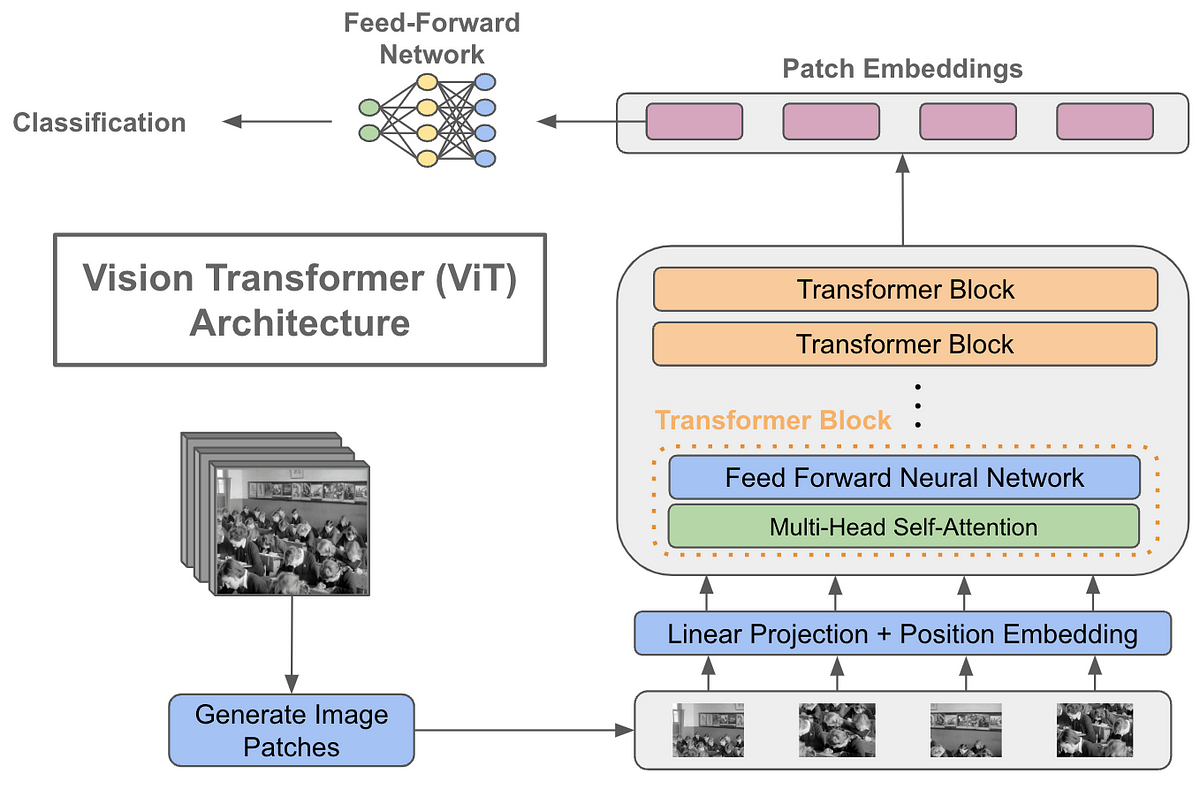

![Vision Transformer: What It Is & How It Works [2023 Guide] Vision Transformer: What It Is & How It Works [2023 Guide]](https://assets-global.website-files.com/5d7b77b063a9066d83e1209c/639b1ed4da71962b47ba4e60_IN%20TEXT%20ASSET-2.webp)